The Shadow of Violence: Anti-AI Groups Under Scrutiny After Altman Attack

The recent attempted firebombing of OpenAI CEO Sam Altman’s San Francisco residence, allegedly perpetrated by 20-year-old Daniel Moreno-Gama, has cast an uncomfortable spotlight on two prominent anti-AI organizations: Pause AI and Stop AI. While both groups have swiftly condemned the violence and disavowed any connection to the suspect, the incident has ignited a conversation about where passionate advocacy ends and radicalization begins within movements critical of artificial intelligence.

Unpacking the Incident

Last Friday’s events were alarming. Beyond the alleged firebombing attempt at Altman’s home, Moreno-Gama reportedly proceeded to OpenAI’s headquarters, where he attempted to smash glass doors with a chair and threatened to set the facility ablaze. The escalation immediately triggered investigations, which revealed Moreno-Gama’s activity on Pause AI’s Discord server and drew renewed attention to Stop AI’s previous direct actions against OpenAI.

Pause AI: Advocating for a Measured Approach

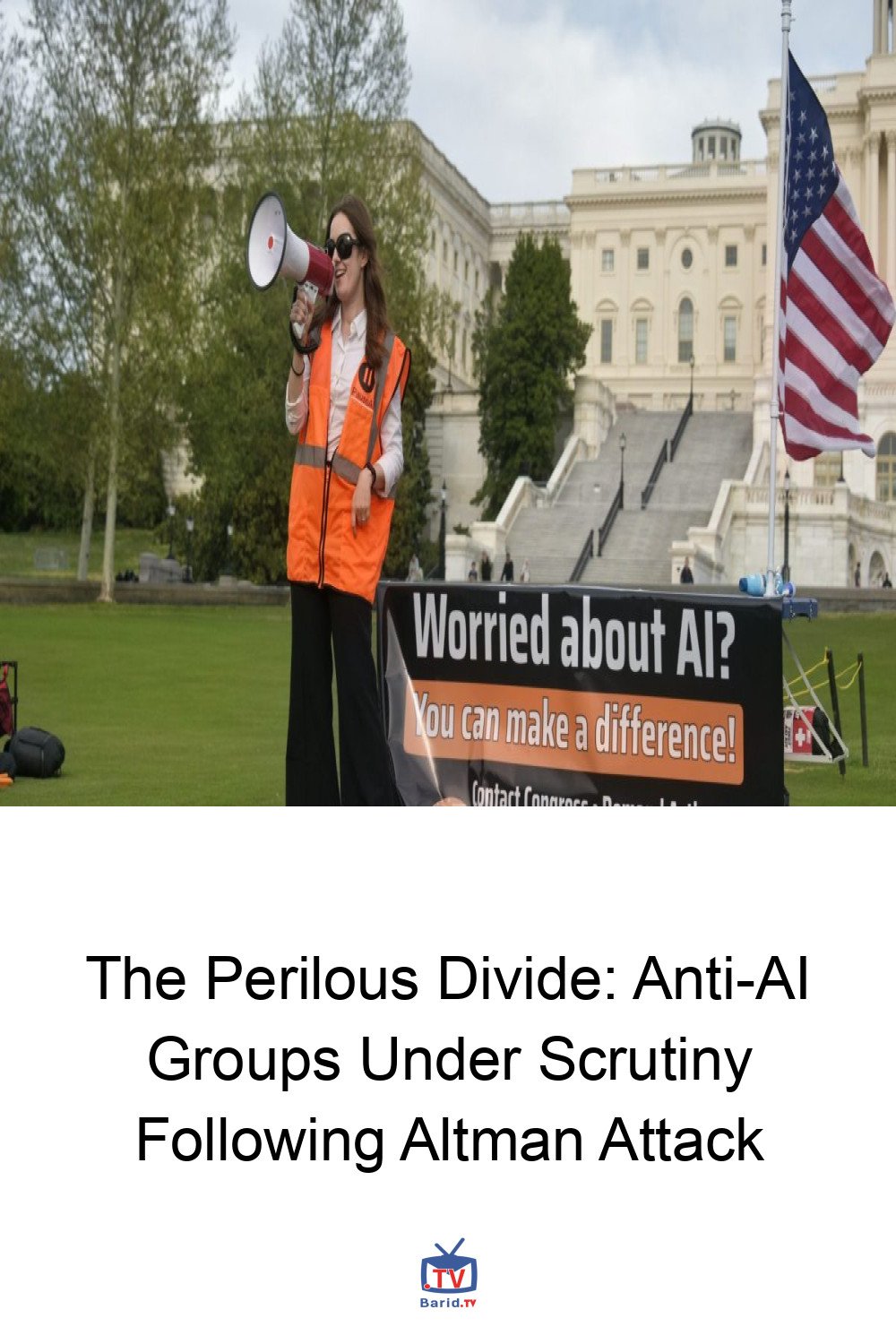

Founded in May 2023 in Utrecht, Netherlands, by Joep Meindertsma, Pause AI emerged with a clear mission: to halt what it terms “dangerous frontier AI.” Inspired by a March 2023 open letter from the Future of Life Institute (now its primary funder), the group has burgeoned into a global grassroots movement with numerous local chapters, including Pause AI US, led by Berkeley-based Holly Elmore. Elmore, a Harvard-educated evolutionary biologist, emphasizes the group’s commitment to peaceful, democratic change.

Despite their non-violent stance, Moreno-Gama’s digital footprint linked him to Pause AI’s Discord server. A post from him, dated December 3, 2025, ominously read: “We are close to midnight, it’s time to actually act.” Pause AI confirmed his presence on the server two years prior, noting he had posted 34 messages, none of which contained “explicit calls to violence.”

Leadership’s Stance on Violence

Holly Elmore recounted her shock upon landing in Washington, D.C., last week, where she was preparing for a peaceful Capitol Hill demonstration. “When I landed, suddenly I was getting these questions about somebody who had attacked Sam Altman’s house,” she told Fortune. Elmore firmly asserted that Pause AI has “no reason to think that this person had much to do with us,” reiterating the group’s explicit prohibition of violence. She clarified that Moreno-Gama’s activity was on a public, global Discord server, separate from Pause AI US’s official organizing channels, and that he held no official role within the organization. Pause AI, she added, diligently vets volunteers to prevent association with extreme views.

The ‘Doom’ Debate: Raising the Stakes

However, Nirit Weiss-Blatt, an independent researcher and author of the AI Panic newsletter, highlights a potential undercurrent. She points to a 2024 documentary, “Near Midnight in Suicide City,” in which Elmore is seen holding a sign proclaiming, “Humanity can’t survive smarter-than-human AI.” Weiss-Blatt suggests that while Elmore never advocates violence, her rhetoric, like that of other “AI doomers” such as Eliezer Yudkowsky, raises the stakes to an urgent, even apocalyptic, level. “When prominent AI doomers… keep insisting that human extinction is imminent, it should not be surprising when someone is driven to extreme action,” Weiss-Blatt commented. “Young, anxious followers, looking for purpose, can be radicalized by apocalyptic AI rhetoric, even without explicit calls for violence.”

Stop AI: A More Confrontational Path Emerges

The incident at Altman’s home also resurfaced past controversies involving Stop AI, a group with a more confrontational history. This organization was co-founded in 2024 by Sam Kirchner and Guido Reichstadter, both of whom had previously been involved with Pause AI.

A Split Over Tactics

The divergence between Pause AI and Stop AI stemmed from fundamental disagreements over tactics. “I kicked them out,” Holly Elmore confirmed. Five months before the Altman attack, OpenAI employees were ordered to shelter in place after Kirchner allegedly threatened to “murder people” at several OpenAI offices in San Francisco, according to police reports. The split underscores the broader challenge within anti-AI movements: how to advocate for caution effectively without crossing into extremism.

Navigating the Extremes: Expert Perspectives

Mauro Lubrano, a lecturer at the University of Bath and author of “Stop the Machines: The Rise of Anti-Technology Extremism,” cautions against broad generalizations. He emphasizes the critical distinction between groups advocating for regulation or a pause in AI development and those that seek to violently eradicate technology. “I think it’s easy to conflate all of these groups and movements that are trying to raise awareness of some of the dangers of AI,” he noted, urging a nuanced understanding of the diverse motivations and methods within the anti-AI landscape.

Conclusion

The alleged attack on Sam Altman has undeniably intensified scrutiny of anti-AI movements. While groups like Pause AI strive for democratic change through peaceful means, the incident serves as a stark reminder of the potential for radicalization when apocalyptic rhetoric meets anxious individuals. The challenge for these movements, and indeed for society, lies in fostering critical dialogue about AI’s future while unequivocally rejecting violence and ensuring that the pursuit of safety does not inadvertently fuel extremism.

