The artificial intelligence landscape is witnessing a seismic shift, and at its epicenter is Cerebras Systems, a Silicon Valley innovator whose recent blockbuster IPO has sent ripples through the tech world. Far from being just another market debut, Cerebras’s monumental entry signals an insatiable global demand for advanced AI processing power, particularly for alternatives to Nvidia’s dominant, yet often scarce and costly, GPUs.

Cerebras: A New Titan in AI Hardware

On its initial trading day on Wall Street, Cerebras’s market capitalization soared to just under $100 billion, placing it in an elite club alongside tech giants like Meta and Alibaba. Although the stock dipped 10% on its first full day of trading, the underlying message is clear: the race for AI supremacy is intensifying, and specialized hardware is key.

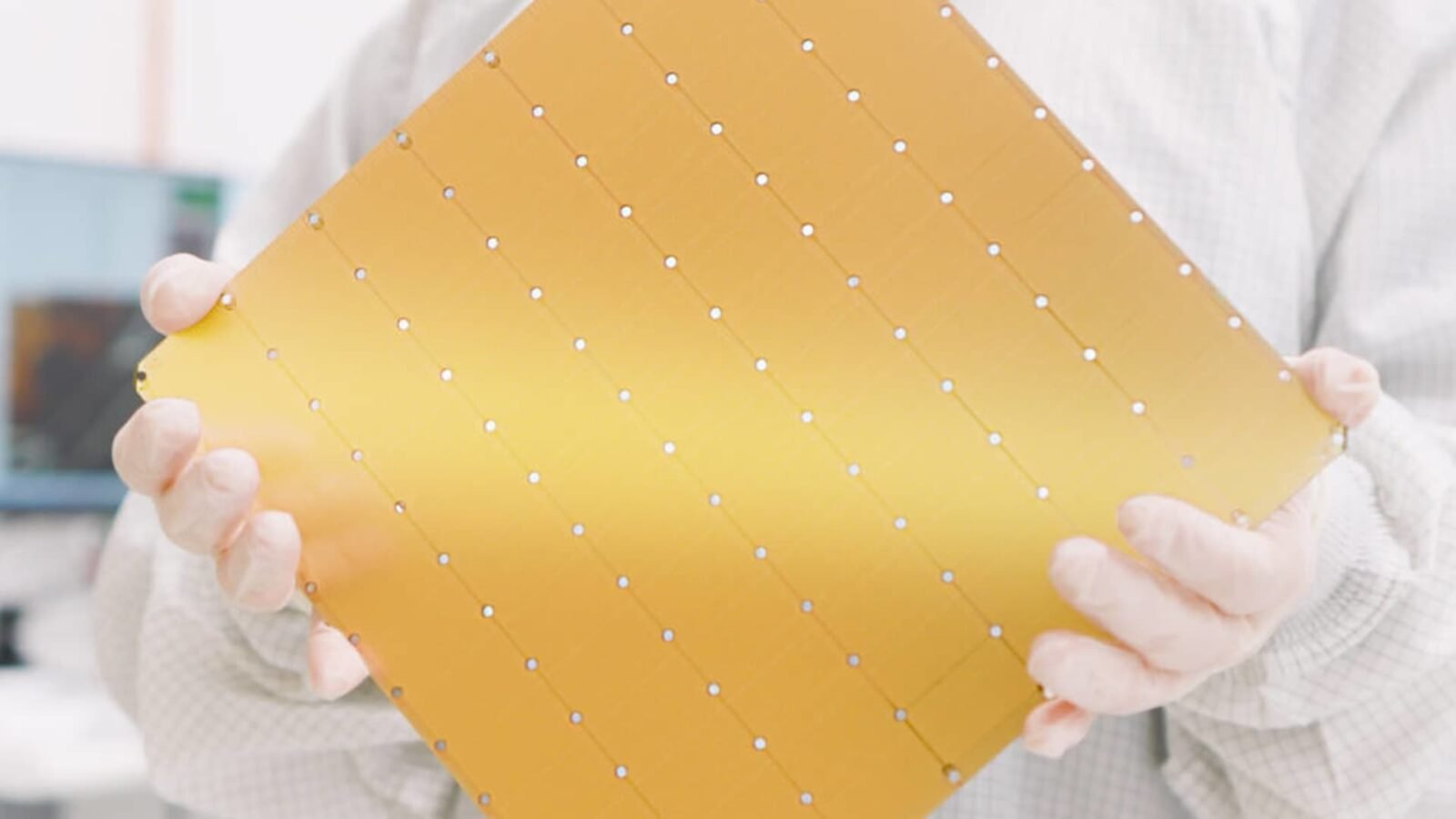

The Dinner-Plate-Sized Difference: WSE-3 ASICs

What sets Cerebras apart from the conventional GPU architecture championed by Nvidia? Its custom-built Application-Specific Integrated Circuits (ASICs), specifically the Wafer-Scale Engine 3 (WSE-3). Unlike general-purpose GPUs, Cerebras’s chips are colossal – literally the size of a dinner plate. “We build the biggest chips in the semiconductor industry,” said Cerebras CEO and co-founder Andrew Feldman. According to the company, this immense scale allows the chips to process vast amounts of information and return results faster than conventional GPUs.

For years, Nvidia’s GPUs have been the workhorses of AI training, excelling at the parallel computations required to teach large models from massive datasets. However, the AI paradigm is evolving. We are now entering the era of “agentic AI,” where the focus shifts from training to inference – the process by which AI models apply their learned knowledge to make real-time decisions based on new information. This is where Cerebras’s specialized ASICs shine.

The WSE-3 boasts staggering specifications: it is 57 times larger than the biggest GPU and packs 50 times as many transistors. While cutting-edge AI chips often rely on Taiwan Semiconductor Manufacturing Company’s (TSMC) most advanced 2-nanometer process, the WSE-3 is produced on TSMC’s older but still highly sophisticated 5-nanometer node, which suggests its performance stems from design and sheer scale rather than from the newest manufacturing process.

From Chip Sales to Cloud Services: A Strategic Pivot

Founded in 2016, Cerebras initially aimed to sell its revolutionary chips directly to companies. However, the company has strategically pivoted, now primarily operating its chips within its own data centers as a cloud service. This move positions Cerebras as a direct competitor to established cloud providers such as Google, Microsoft, Oracle, and CoreWeave, offering specialized AI compute power on demand.

This shift has already yielded significant partnerships. In January, Cerebras announced a substantial $20 billion cloud deal with OpenAI, set to run until 2028. Further solidifying its market presence, Amazon Web Services (AWS) revealed in March that it is integrating Cerebras chips into its data centers. Demand is now outpacing supply, according to Cerebras CFO Bob Komin: “For our fast inference product, there’s so much demand that our biggest challenge is actually trying to supply it. We are adding as much manufacturing and data center capacity as we possibly can, and we’re still sold out into 2027.”

The Expanding AI Chip Ecosystem: Competitors and Collaborators

While hyperscalers like Google and Amazon develop their own in-house ASICs, Cerebras’s closest rivals are firms specializing in creating these custom chips for others. Groq, for instance, recently made headlines when Nvidia acquired its technology for $20 billion and subsequently announced custom Groq Language Processing Units. The deal highlights Nvidia’s recognition of the growing importance of specialized inference hardware.

Other formidable players in this burgeoning market include SambaNova and D-Matrix. SambaNova, backed by Intel and serving clients like Hugging Face and Meta with its SN50 chips, demonstrates the broad industry interest in alternative AI hardware. Cerebras’s successful IPO is also expected to pave the way for other custom ASIC startups eyeing public offerings, such as South Korean chipmaker Rebellions, which recently secured $400 million from investors including Samsung.

The “wild IPO” of Cerebras Systems is more than just a financial event; it’s a powerful indicator of the AI industry’s relentless pursuit of specialized, high-performance computing solutions. As agentic AI continues to evolve, companies like Cerebras are not just competing with Nvidia; they are actively shaping the future of artificial intelligence itself, one dinner-plate-sized chip at a time.
