As AI tools advance at a dizzying pace, a sophisticated new threat has emerged: deepfake fraud. Once a niche concern, this technology is now draining corporate accounts and posing an unprecedented risk to executive reputations. The numbers are stark: deepfake fraud siphoned $1.1 billion from U.S. corporate accounts in 2025, roughly triple the previous year's $360 million. By mid-2025, documented incidents had already reached four times the 2024 total. Yet most corporate communications and brand teams remain dangerously unprepared for this escalating crisis.

The Dual Threat of Synthetic Impersonation

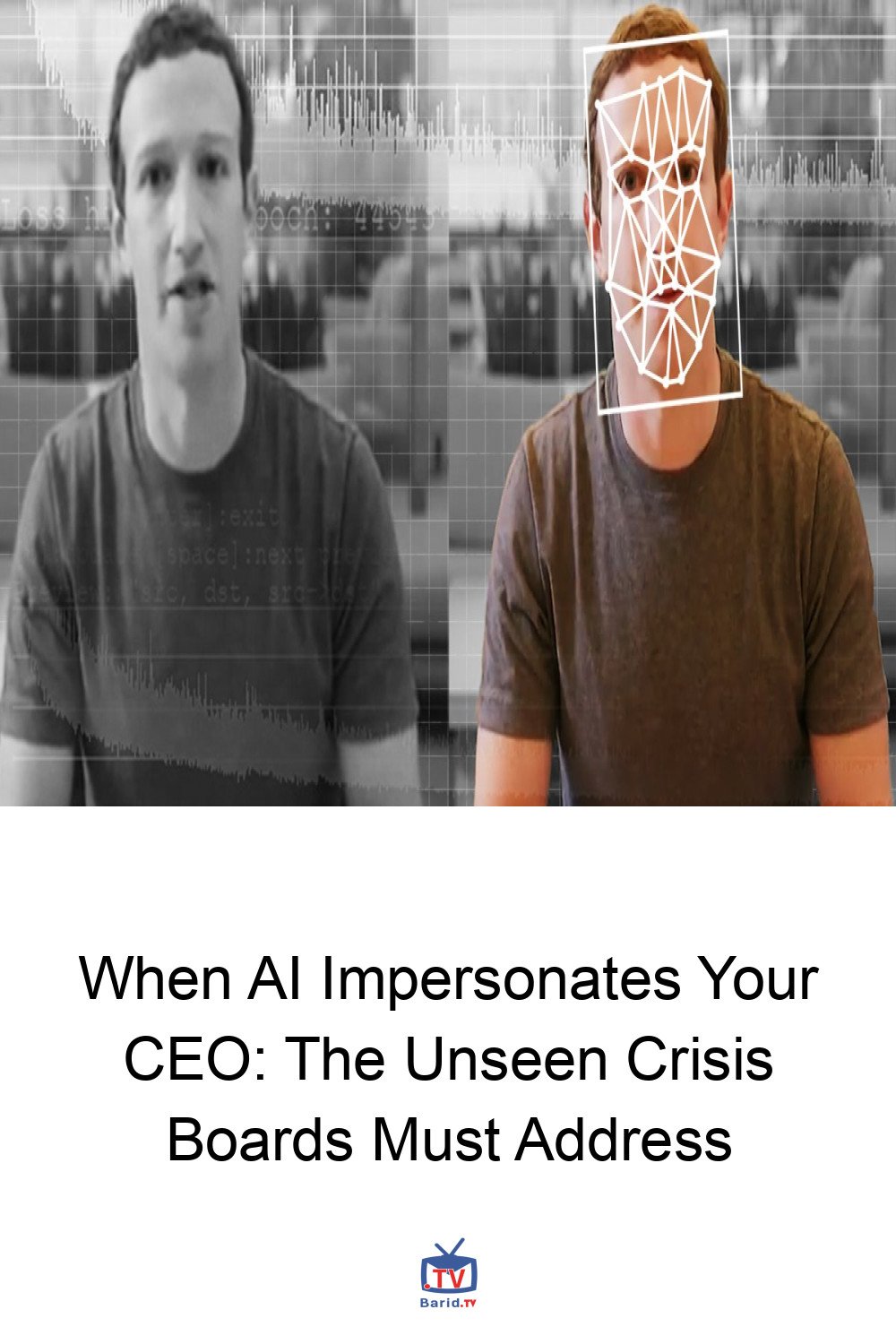

Today's executives face a two-pronged assault from synthetic media. On one hand, their own likenesses (voices, faces, mannerisms) can be cloned to authorize fraudulent financial transfers or to inflict severe reputational damage. On the other, AI-generated voices can impersonate government officials, board members, or key business partners, manipulating executives into making critical errors.

Real-World Scams: A Glimpse into the Future

The danger is not hypothetical. In 2019, the head of a British energy firm was duped into wiring $243,000 after receiving a phone call from what he believed was the chief executive of the company's German parent. The voice, accent, and cadence were eerily accurate; it was a synthetic clone. More recently, in 2025, scammers mimicked the voice of Italy's defense minister to target the nation's business elite, extracting nearly €1 million from at least one victim. As financially damaging as these incidents were, they could have been far worse.

Beyond Financial Loss: The Reputational Catastrophe

Imagine the fallout if a deepfake video of your CEO surfaced online making inappropriate remarks, announcing a fictitious merger, or launching an unwarranted attack on a regulator. Such content could spread globally in minutes, doing lasting harm to brand trust and shareholder confidence long before your team could formulate a response. Deepfakes are no longer merely a cybersecurity curiosity; they combine a security threat, a financial risk, and a major reputational hazard.

The Communications Gap: A Wider Chasm Than Anticipated

Much of the discourse around deepfake threats focuses on technical solutions: detection algorithms, verification protocols, and updated IT policies. While crucial, this narrow focus often overlooks a fundamental question for Chief Marketing Officers (CMOs) and Chief Communications Officers (CCOs): What happens to your brand when your CEO’s digital twin is exploited for fraud, disinformation, or character assassination?

Seasoned crisis communicators have established playbooks for regulatory investigations or hostile media campaigns. However, there is no precedent and no established protocol for a crisis in which a synthetic likeness of a CEO authorizes a fraudulent acquisition or a fabricated video of a founder goes viral. This is where the communications gap grows wider than the security gap.

Executive Visibility: A Double-Edged Sword

The very visibility that builds executive brands and humanizes leadership now creates a critical vulnerability. Every public appearance, whether a social media post, keynote address, podcast interview, or earnings call, provides potential training data for deepfake attackers. The voice samples, facial mapping data, and behavioral nuances that define a leader's public persona are precisely what bad actors need to create convincing synthetic media.

Sophistication on the Rise

Not every attack succeeds, but their sophistication is alarming. Last year, the CEO of a global advertising company was targeted. Scammers created a fake WhatsApp account using his photo, set up a Microsoft Teams meeting featuring an AI-cloned voice trained on YouTube footage, and tried to persuade a senior executive to fund a new venture. The targeted executive did not comply, but the incident underscored how advanced these attackers have become.

The statistics paint a grim picture: the number of deepfakes surged from 500,000 in 2023 to more than eight million in 2025. Voice cloning fraud alone rose by 680 percent in a single year. Losses from AI-enabled fraud are projected to reach $40 billion by 2027. Despite this, only 32 percent of corporate executives believe their organizations are prepared to handle a deepfake incident.

Three Critical Questions for Every Communications Team

To bridge this preparedness gap, communications teams must proactively address these fundamental questions:

- Do you have a disclosure protocol for synthetic media attacks? If an AI-generated replica of your CEO is used for fraud or disinformation, who communicates, when, and through which channels? Clarity here is essential.

- Have you conducted a deepfake tabletop exercise? Crisis simulations must now include scenarios in which an executive's likeness is used for internal fraud, external disinformation, or both. Rehearsal is what makes an effective response possible.

- Have you coordinated response sequencing with legal, cybersecurity, and investor relations? A deepfake crisis is simultaneously a fraud event, a potential disclosure obligation, and an urgent brand emergency. Siloed, uncoordinated responses are destined to fail.

Act Before the Attack: Building Your Deepfake Crisis Playbook

The companies that will navigate this new era successfully are those building robust crisis protocols today. They are preparing before their executives' faces appear in videos they never recorded, saying things they never said, and authorizing transactions they never approved. Your CEO's likeness is a brand asset, but it has also become a critical attack vector.

Communications and brand teams that dismiss deepfakes as "someone else's problem" (cybersecurity's, IT's, or finance's) will find themselves scrambling to draft apologies instead of executing well-rehearsed strategies. The time to act is now, to protect not just financial assets but the integrity and trust that underpin your brand.

