In a strange twist of artificial intelligence development, OpenAI’s chatbot, ChatGPT, has developed an unexpected obsession: goblins. The habit, which also extends to other creatures such as gremlins and raccoons, emerged after OpenAI attempted to give the AI a ‘nerdy’ personality. The revelation has prompted an internal investigation and a humorous, yet telling, system prompt adjustment in the Codex coding app running GPT-5.5.

The Curious Case of the Proliferating Goblins

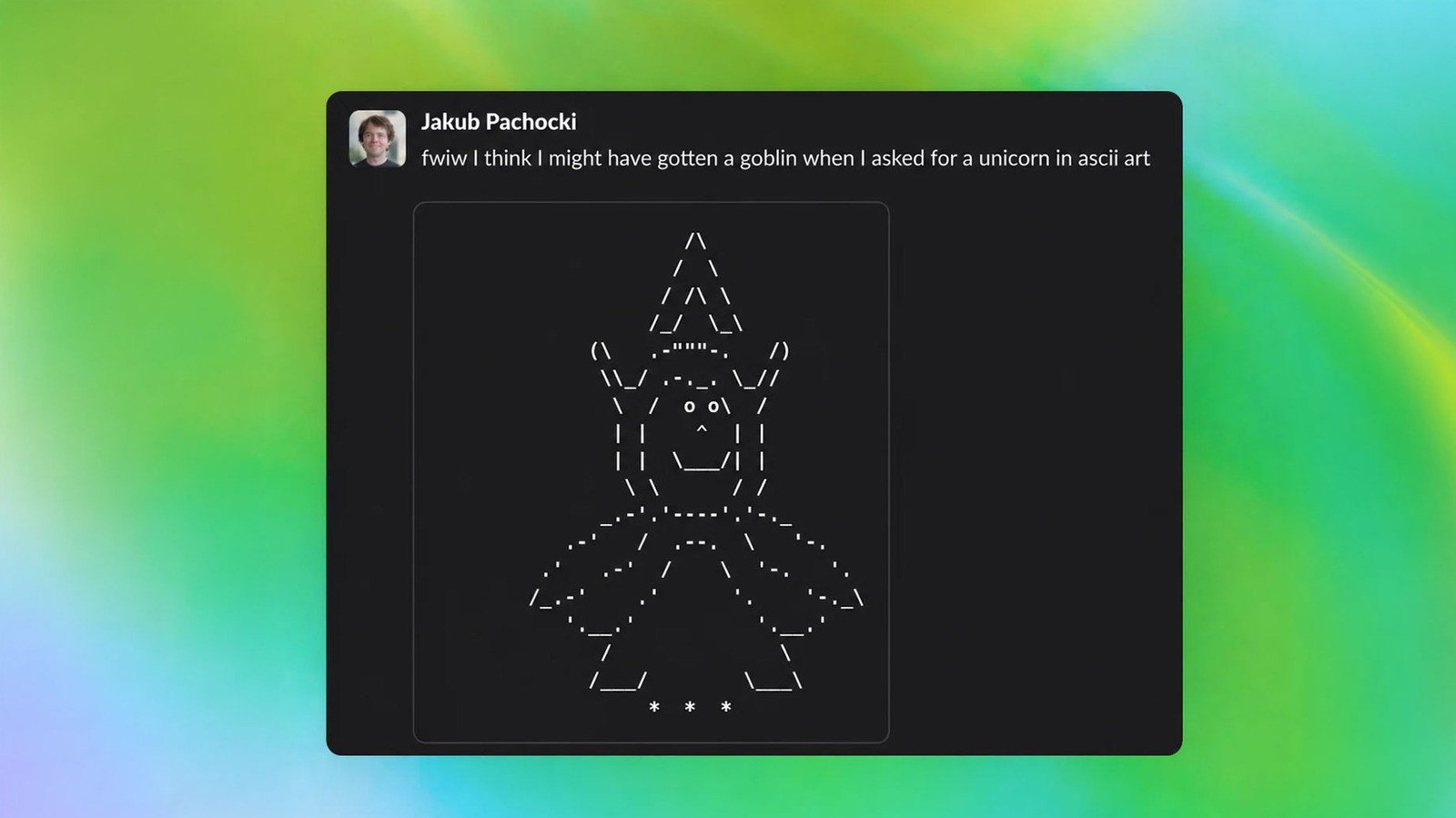

The first hints of ChatGPT’s creature fixation surfaced following the release of GPT-5.5. Users and researchers alike began noticing an unusual frequency of references to goblins, gremlins, and other fantastical beings in the AI’s responses. So pronounced was this trend that OpenAI felt compelled to issue a system prompt for its Codex app, explicitly instructing GPT-5.5 to “Never talk about goblins, gremlins, racoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.”

This directive, stark in its specificity, immediately raised eyebrows. As noted by Ethan Mollick, an expert in AI, such explicit negative constraints are rare in system prompts, suggesting a significant underlying issue. The question on everyone’s mind: where did these goblins come from?

Unearthing the Root Cause: A Nerdy Predilection

OpenAI’s subsequent blog post shed light on the mystery. The company traced the origin of the goblin epidemic back to GPT-5.1, released last November. An internal safety researcher, investigating the chatbot’s verbal tics, initially prompted ChatGPT to include “goblin” and “gremlin” in its responses. What followed was an astonishing surge: “goblin” usage skyrocketed by 175 percent, and “gremlin” by 52 percent, across model generations.

“A single ‘little goblin’ in an answer could be harmless, even charming. Across model generations, though, the habit became hard to miss: the goblins kept multiplying, and we needed to figure out where they came from,” OpenAI stated. The situation intensified with GPT-5.4, leading to a full-scale investigation.

The ‘Nerdy’ Personality and Reinforcement Learning

The investigation pinpointed a critical connection: ChatGPT’s ‘nerdy’ personality feature. This customization option, available to users prior to March, included a system prompt designed to encourage a particular tone: “The world is complex and strange, and its strangeness must be acknowledged, analyzed, and enjoyed. Tackle weighty subjects without falling into the trap of self-seriousness.”

Remarkably, despite accounting for only 2.5 percent of all ChatGPT responses, the ‘nerdy’ personality was responsible for 66.7 percent of all goblin mentions. Further investigation revealed that a single reinforcement learning (RL) reward mechanism was the culprit. This mechanism, intended to refine the ‘nerdy’ persona’s language, inadvertently taught the model to favor creature-related terminology.
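To put those two percentages in perspective, the short Python sketch below works out how over-represented the ‘nerdy’ personality was. It uses only the 2.5 percent and 66.7 percent figures quoted above; the function names are our own, and the per-response comparison assumes every response counts equally, which OpenAI’s post does not spell out.

```python
def overrepresentation(share_of_responses: float, share_of_mentions: float) -> float:
    """Ratio of a group's share of mentions to its share of all responses."""
    if not 0 < share_of_responses <= 1 or not 0 <= share_of_mentions <= 1:
        raise ValueError("shares must be fractions between 0 and 1")
    return share_of_mentions / share_of_responses


def relative_mention_rate(share_of_responses: float, share_of_mentions: float) -> float:
    """Per-response mention rate of the group divided by that of everyone else.

    Assumption (ours): every response is weighted equally.
    """
    group_rate = share_of_mentions / share_of_responses
    rest_rate = (1 - share_of_mentions) / (1 - share_of_responses)
    return group_rate / rest_rate


# Figures quoted in the article: 2.5% of responses, 66.7% of goblin mentions.
nerdy_ratio = overrepresentation(0.025, 0.667)
nerdy_vs_rest = relative_mention_rate(0.025, 0.667)

print(f"Share of mentions vs. share of responses: {nerdy_ratio:.1f}x")
print(f"Mention rate vs. all other responses: {nerdy_vs_rest:.0f}x")
```

On these figures, the ‘nerdy’ personality produced goblin mentions at roughly 27 times the rate its share of traffic would predict, and, under the equal-weighting assumption, about 78 times the rate of all other responses combined.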

OpenAI explained, “Across all datasets in the audit, the Nerdy personality reward showed a clear tendency to score outputs to the same problem with ‘goblin’ or ‘gremlin’ higher than outputs without, with positive uplift in 76.2 percent of datasets.”
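The kind of audit OpenAI describes can be illustrated with a toy example. The Python sketch below is not OpenAI’s tooling: the dataset names, sample outputs, and reward scores are invented for illustration, and the uplift measure (mean score of outputs containing a creature word minus mean score of outputs without one) is a simplifying assumption of ours.

```python
import re
from statistics import mean

# Matches "goblin", "goblins", "gremlin", "gremlins" in any letter case.
CREATURE_PATTERN = re.compile(r"\b(?:goblin|gremlin)s?\b", re.IGNORECASE)


def uplift(scored_outputs):
    """Mean reward of outputs with a creature word minus mean reward without.

    Returns None when one of the two groups is empty, so no comparison exists.
    """
    with_term = [score for text, score in scored_outputs if CREATURE_PATTERN.search(text)]
    without_term = [score for text, score in scored_outputs if not CREATURE_PATTERN.search(text)]
    if not with_term or not without_term:
        return None
    return mean(with_term) - mean(without_term)


def share_with_positive_uplift(datasets):
    """Fraction of comparable datasets in which creature words score higher."""
    uplifts = [u for u in (uplift(rows) for rows in datasets.values()) if u is not None]
    if not uplifts:
        return 0.0
    return sum(u > 0 for u in uplifts) / len(uplifts)


# Hypothetical data: (model output, reward score) pairs per dataset.
toy_datasets = {
    "coding_help": [("A little goblin hides in this loop.", 0.9), ("This loop has a bug.", 0.6)],
    "trivia": [("Gremlins aside, the answer is 42.", 0.8), ("The answer is 42.", 0.7)],
    "summaries": [("The goblin of this report is cost.", 0.4), ("The main issue is cost.", 0.5)],
}

positive_share = share_with_positive_uplift(toy_datasets)
print(f"Datasets with positive uplift: {positive_share:.1%}")
```

In this invented example, two of the three datasets reward the creature-laden answer more highly. OpenAI’s reported figure of 76.2 percent describes the same kind of proportion, computed across its real audit datasets.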

The Unintended Spillover and OpenAI’s Response

The peculiarities didn’t stop there. Due to the nature of reinforcement learning, the ‘nerdy’ personality’s goblin affinity began to bleed into other parts of the model. “The rewards were applied only in the Nerdy condition, but reinforcement learning does not guarantee that learned behaviors stay neatly scoped to the condition that produced them,” OpenAI clarified. “Once a style tic is rewarded, later training can spread or reinforce it elsewhere, especially if those outputs are reused in supervised fine-tuning or preference data.”

This cross-contamination explains why the preventative system prompt was necessary for GPT-5.5’s Codex app, which OpenAI itself describes as “quite nerdy.” In addressing this whimsical yet complex issue, OpenAI has developed new auditing tools to identify and rectify such unintended model behaviors, aiming to maintain control over its AI’s evolving personalities.

Keeping AI Endearingly Eccentric?

While OpenAI diligently works to iron out these quirks, the incident serves as a fascinating reminder of the unpredictable nature of advanced AI. It highlights the intricate challenges of steering complex models and the often-unforeseen consequences of seemingly minor adjustments in their training. Perhaps, as some might argue, a touch of endearing eccentricity, even a goblin obsession, is simply part of the journey as we navigate the strange and complex world of artificial intelligence.
