The AI Tsunami: From Drive-Thrus to Digital Sculpting

Artificial intelligence is no longer a distant concept; it’s woven into the fabric of our daily lives, from mundane tasks like taking drive-thru orders (sometimes with comical failures) to revolutionizing complex creative processes. While large language models like ChatGPT capture headlines, a specialized frontier is rapidly expanding: the generation of intricate 3D models using AI. For enthusiasts in the 3D printing and digital design communities, this emerging capability is nothing short of transformative.

A Personal Odyssey into AI-Generated 3D

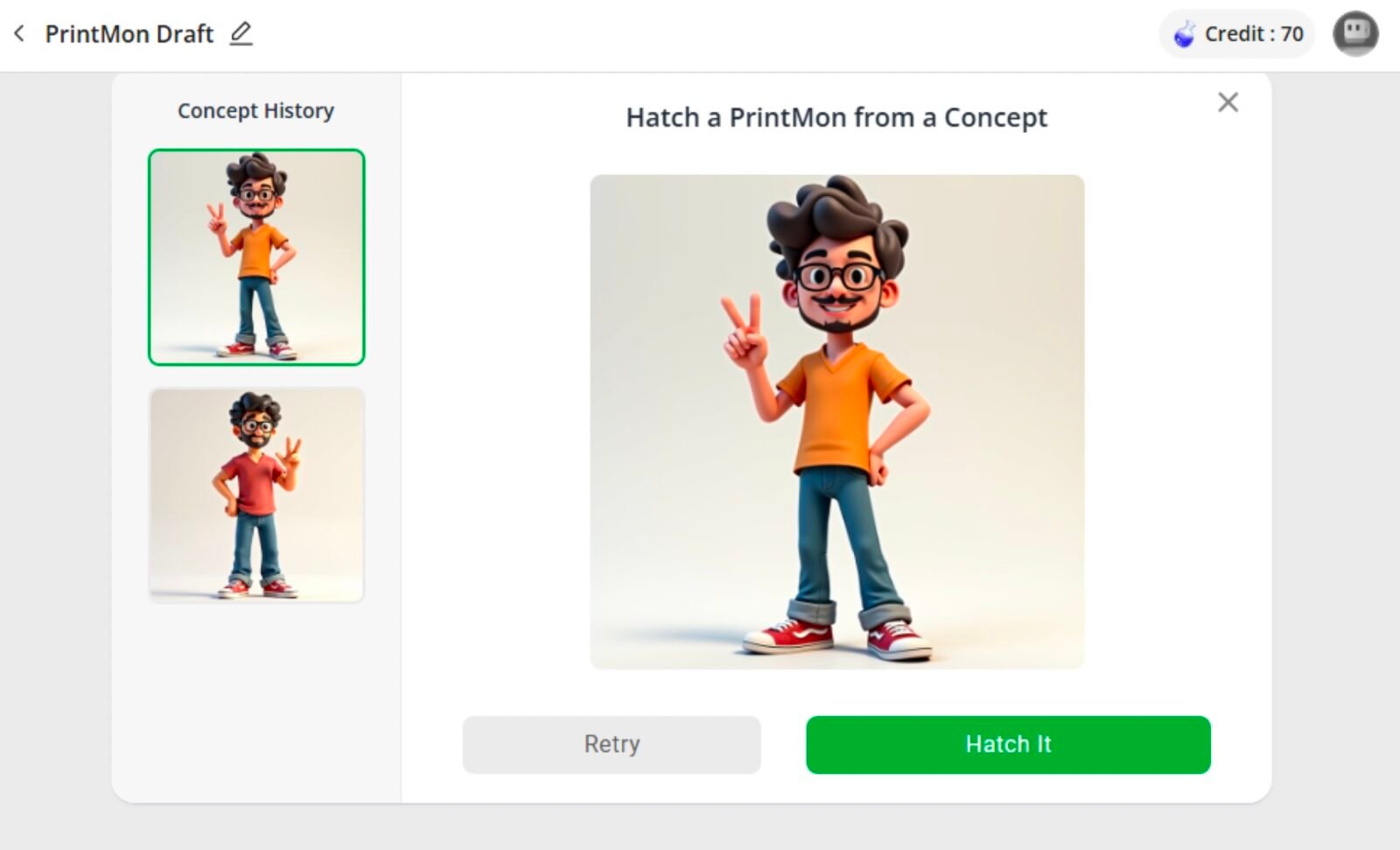

The journey into AI-powered 3D modeling began subtly. I recall Make: Magazine friend Andrew Sink’s early triumph: coaxing ChatGPT to produce a rudimentary STL file of a cube. It was imperfect yet workable: only a cube, but a significant first step. My own deep dive commenced in late 2024, and in just a single year, the progress has been breathtaking. I ran an experiment, giving various AI models the same text prompt and the same photograph of myself. The prompt read: “A man with medium length, curly hair, a short beard and mustache, rounded rectangle glasses (no lenses), jeans and a V neck t-shirt. He has one hand on his hip and he’s giving a peace sign with the other. He is wearing Vans style tennis shoes.” Here’s what I discovered.
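For a sense of how small that first cube really is, here is a minimal Python sketch that writes an ASCII STL cube by hand. It is not Andrew Sink's file or ChatGPT's output. The function name `cube_stl`, the 10-unit edge length, and the vertex ordering are all assumptions chosen for illustration.

```python
def cube_stl(size=10.0, name="cube"):
    """Return the text of an ASCII STL file describing an axis-aligned cube."""
    s = float(size)
    # The eight corners of the cube, indexed 0-7.
    v = [
        (0, 0, 0), (s, 0, 0), (s, s, 0), (0, s, 0),
        (0, 0, s), (s, 0, s), (s, s, s), (0, s, s),
    ]
    # Six square faces: (outward normal, corner indices listed
    # counter-clockwise when viewed from outside the cube).
    faces = [
        ((0, 0, -1), (0, 3, 2, 1)),  # bottom
        ((0, 0, 1), (4, 5, 6, 7)),   # top
        ((0, -1, 0), (0, 1, 5, 4)),  # front
        ((0, 1, 0), (3, 7, 6, 2)),   # back
        ((-1, 0, 0), (0, 4, 7, 3)),  # left
        ((1, 0, 0), (1, 2, 6, 5)),   # right
    ]
    lines = [f"solid {name}"]
    for normal, (a, b, c, d) in faces:
        # STL stores triangles only, so each square becomes two triangles.
        for tri in ((a, b, c), (a, c, d)):
            lines.append("  facet normal {:g} {:g} {:g}".format(*normal))
            lines.append("    outer loop")
            for i in tri:
                lines.append("      vertex {:g} {:g} {:g}".format(*v[i]))
            lines.append("    endloop")
            lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


stl_text = cube_stl()
```

Saving `stl_text` to a file with an `.stl` extension should give a slicer twelve triangles to work with. That is very little geometry compared with the human figures discussed below.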

Meshy: A Rapid Evolution in Digital Likeness

My first stop was Meshy.ai, a platform offering both text-to-3D and photo-to-3D model generation. My experience over the past year highlights its remarkable evolution.

2024: Early Forays and Mixed Realities

A year ago, as a free user, I received 200 monthly credits, with each model consuming 10 credits. The system allowed one low-priority task in the queue, and all generated models were released under the CC BY 4.0 license, requiring attribution to Meshy. Meshy would generate four iterations, allowing me to pick one to refine. The results were, to put it mildly, mixed. Much like early image-generating AIs, Meshy had its own unique interpretation of requests. Text-based prompts often yielded cartoonish figures, while photograph-based attempts aimed for realism but frequently missed the mark on likeness, sometimes producing what could only be described as a ‘blob’ rather than a person.

2025: Sharper Focus, Uncanny Valleys

Today, Meshy presents a more refined experience. New users receive 100 credits, and each task consumes 20 credits. The platform now prompts for preferred styles (I chose ‘characters’ and ‘cartoon’). Despite those choices, my text prompt for a realistic man delivered a single, realistic model. While it didn’t capture my likeness (I hadn’t asked it to), it followed the prompt accurately. Zooming in reveals slightly blurred features, but for a small 3D-printed action figure, it would be quite passable. The photograph-generated bust was significantly more photorealistic, yet it veered into the uncanny valley. The progress in just one year is undeniable.
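To put the two free allowances side by side, here is a quick back-of-the-envelope calculation using only the figures quoted above. It assumes one task produces one model and ignores any refinement costs. The 2024 allowance was monthly, while I have not confirmed whether the 2025 figure renews. The helper name `models_per_allowance` is made up for this sketch.

```python
def models_per_allowance(credits, cost_per_model):
    """How many whole models an allowance covers at a given per-model cost."""
    return int(credits // cost_per_model)


# Figures as quoted in this article (free tier).
meshy_2024 = models_per_allowance(200, 10)  # 200 monthly credits, 10 per model
meshy_2025 = models_per_allowance(100, 20)  # 100 credits, 20 per task

print(f"2024: {meshy_2024} models, 2025: {meshy_2025} models")
```

By that rough measure the free allowance covers a quarter as many generations as it did a year ago, although each one is noticeably better.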

Rodin Diffusion: Pushing the Boundaries of Detail

Next, I explored Rodin Diffusion, hosted on the Hyper3D website. This platform also operates on a credit system with paid tiers, and its development has been equally swift.

2024: Promising Starts, Peculiar Additions

Upon signing up, new users received 5 credits, with each model costing 0.5 credits (a discounted rate, likely for new users). Rodin’s text-based results were inconsistent. As a free user, I was limited by polygon count, which impacted detail. The AI often added unsolicited elements, like a watch, and struggled significantly with the peace sign gesture. Surprisingly, the photograph-generated bust was far better than expected, bearing a striking resemblance to me despite the polygon restrictions. The ability to request multiple regenerations before committing to the final model file was a valuable feature, and the final output was noticeably smoother and clearly 3D printable.

2025 (Gen 2): Ambitious, Yet Imperfect Creations

Rodin Diffusion’s latest iteration, Gen 2, shows how ambitious its development has been. The text prompt immediately generated a small preview image of the described man. While far from clean upon closer inspection, this image serves as the source for the main generation. Hitting the ‘Generate’ button provides a free preview before credits are used. The features are more defined than Meshy’s, but accuracy remains a challenge. The peace sign was almost alien-like, and the model appeared to be holding a donut alongside it. Most notably, an entire extra arm was present. The preview image clearly contributed to these oddities in the 3D model, and repeated ‘Redo’ attempts didn’t resolve them. Despite these quirks, Rodin Gen 2 offers a robust starting point for those willing to import and refine models in professional software like Blender or Nomad Sculpt.

The Road Ahead: AI’s Unfinished Masterpiece

My year-long experiment underscores the incredible pace of innovation in AI-powered 3D model generation. From simple cubes to complex, albeit sometimes flawed, human figures, these tools are rapidly evolving. While current limitations include occasional anatomical anomalies and the ‘uncanny valley’ effect, the potential for artists, designers, and hobbyists is immense. As these AI models continue to learn and refine their capabilities, the dream of effortlessly generating intricate, high-quality 3D models moves ever closer to reality, promising to democratize digital sculpting and accelerate creative workflows.
