OpenAI’s Codex Security: An AI Sentinel Uncovering Thousands of Critical Software Flaws

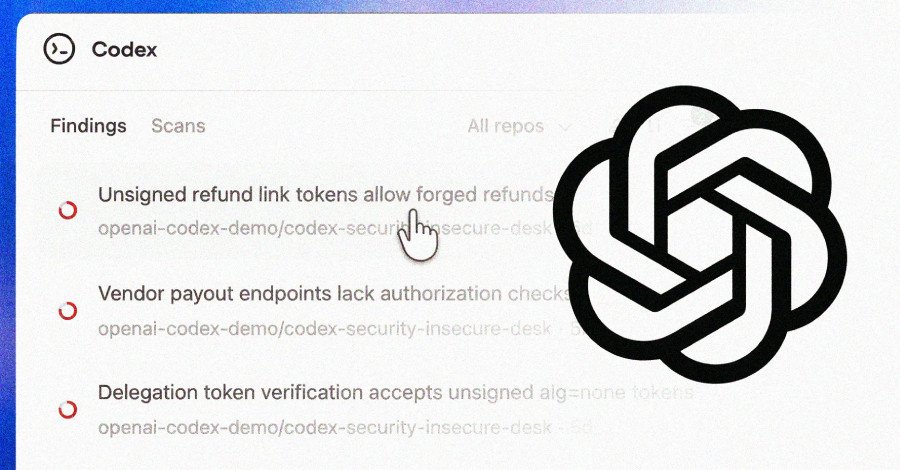

OpenAI has launched Codex Security, an AI-powered agent that autonomously detects and validates vulnerabilities in codebases and proposes fixes for them. The feature is available in research preview to ChatGPT Pro, Enterprise, Business, and Edu customers through the Codex web platform, with the first month of usage free.

Revolutionizing Vulnerability Detection with Deep Context

OpenAI touts Codex Security as a sophisticated tool that ‘builds deep context about your project to identify complex vulnerabilities that other agentic tools miss.’ This deep contextual understanding allows it to surface high-confidence findings, providing actionable fixes that genuinely enhance system security while filtering out the ‘noise of insignificant bugs.’

Codex Security evolves from Aardvark, OpenAI’s previous security offering, which was unveiled in private beta in October 2025 to give developers and security teams scalable vulnerability detection and remediation. Codex Security extends those capabilities.

Impressive Early Performance: A Deep Dive into the Numbers

Over the past 30 days of the beta, Codex Security scanned more than 1.2 million commits across external repositories. The scans produced 792 critical findings and 10,561 high-severity findings.

The findings span a range of prominent open-source projects, including OpenSSH, GnuTLS, GOGS, Thorium, libssh, PHP, and Chromium. Identified vulnerabilities include:

- GnuPG: CVE-2026-24881, CVE-2026-24882

- GnuTLS: CVE-2025-32988, CVE-2025-32989

- GOGS: CVE-2025-64175, CVE-2026-25242

- Thorium: CVE-2025-35430, CVE-2025-35431, CVE-2025-35432, CVE-2025-35433, CVE-2025-35434, CVE-2025-35435, CVE-2025-35436

The Mechanics of AI-Powered Security

According to OpenAI, Codex Security combines the reasoning capabilities of the company’s frontier models with automated validation. Pairing the two is meant to minimize false positives and ensure that proposed fixes are actionable.

OpenAI’s internal testing has shown a consistent increase in precision and a dramatic decline in false positive rates, with the latter dropping by over 50% across all scanned repositories. This improvement in signal-to-noise is achieved by grounding vulnerability discovery in the system’s context and rigorously validating findings before presenting them to users.
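To see how fewer false positives translate into higher precision, consider the small Python sketch below. The counts are invented purely for illustration and are not OpenAI's data. The sketch also assumes that "false positive" means a reported finding that turns out not to be a real vulnerability, so that precision is the share of reported findings that are real.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of reported findings that are real vulnerabilities."""
    reported = true_positives + false_positives
    if reported == 0:
        raise ValueError("no findings reported")
    return true_positives / reported


# Hypothetical scan: 60 real issues and 40 false alarms.
before = precision(true_positives=60, false_positives=40)

# Same real issues, with the false alarms cut in half.
after = precision(true_positives=60, false_positives=20)

if __name__ == "__main__":
    print(f"precision before: {before:.2f}")
    print(f"precision after:  {after:.2f}")
```

With these made-up numbers, halving the false alarms raises precision from 0.60 to 0.75 without finding a single additional vulnerability, which is why validating findings before reporting them improves the signal-to-noise ratio.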

A Three-Stage Approach to Remediation

The agent works in three stages, each building on the one before it:

- System Analysis & Threat Modeling: Codex Security first analyzes a repository to grasp the project’s security-relevant structure. It then generates an editable threat model, outlining the system’s functions and its most exposed areas.

- Vulnerability Identification & Validation: Using the established system context as a foundation, the agent identifies potential vulnerabilities. These findings are then classified based on their real-world impact and rigorously pressure-tested in a sandboxed environment for validation. OpenAI emphasizes that when configured with a tailored environment, Codex Security can validate issues directly within the running system, further reducing false positives and generating working proofs-of-concept for security teams.

- Intelligent Fix Proposal: In the final stage, Codex Security proposes fixes that are aligned with the system’s behavior. The aim is to minimize regressions and make the fixes easier for developers to review and deploy.
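The three stages above can be pictured as a simple pipeline. The Python sketch below is illustrative only: every name in it (`ThreatModel`, `Finding`, `build_threat_model`, `identify`, `validate`, `propose_fix`, `run_pipeline`) is hypothetical, and simple string matching stands in for both model reasoning and sandboxed validation. It does not reflect OpenAI's implementation or any Codex Security API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ThreatModel:
    """Stage 1 output: files judged most exposed to untrusted input."""
    exposed_paths: list[str] = field(default_factory=list)


@dataclass
class Finding:
    path: str
    description: str
    severity: str
    validated: bool = False
    proposed_fix: Optional[str] = None


def build_threat_model(repo: dict[str, str]) -> ThreatModel:
    # Toy heuristic: a file is "exposed" if it mentions request data or argv.
    exposed = [p for p, src in repo.items() if "request" in src or "argv" in src]
    return ThreatModel(exposed_paths=sorted(exposed))


def identify(repo: dict[str, str], model: ThreatModel) -> list[Finding]:
    # Toy stand-in for model reasoning: flag eval() calls in exposed files only.
    return [
        Finding(path, "eval() reachable from external input", "critical")
        for path in model.exposed_paths
        if "eval(" in repo[path]
    ]


def validate(finding: Finding, repo: dict[str, str]) -> Finding:
    # Toy stand-in for sandboxed validation: confirm that request data
    # is passed directly into eval().
    finding.validated = "eval(request" in repo[finding.path]
    return finding


def propose_fix(finding: Finding) -> Finding:
    # Only validated findings receive a fix suggestion.
    if finding.validated:
        finding.proposed_fix = "Parse the input with ast.literal_eval() instead of eval()."
    return finding


def run_pipeline(repo: dict[str, str]) -> list[Finding]:
    model = build_threat_model(repo)                 # stage 1
    candidates = identify(repo, model)               # stage 2: identify
    checked = [validate(f, repo) for f in candidates]  # stage 2: validate
    return [propose_fix(f) for f in checked if f.validated]  # stage 3


if __name__ == "__main__":
    demo_repo = {
        "app.py": "value = eval(request.args['q'])",
        "report.py": "# handles each request\ntotal = eval('1 + 1')",
        "tools.py": "result = eval('1 + 1')",
    }
    for finding in run_pipeline(demo_repo):
        print(finding.path, "->", finding.proposed_fix)
```

In the demo repository, `tools.py` is never examined because the threat model does not mark it as exposed, and `report.py` is flagged as a candidate but dropped at validation because its `eval()` call takes a constant. Only `app.py` survives to the fix stage, mirroring how context and validation are described as filtering out noise before results reach the user.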

The Evolving Landscape of AI in Cybersecurity

The introduction of Codex Security comes hot on the heels of Anthropic’s Claude Code Security launch, a similar offering designed to assist users in scanning codebases for vulnerabilities and suggesting patches. This parallel development underscores a growing trend: the increasing integration of advanced AI into the cybersecurity domain, promising a future where software is not only built faster but also secured more intelligently.

As AI continues to mature, tools like Codex Security are poised to become indispensable assets for developers and security professionals, shifting the paradigm from reactive bug fixing to proactive, context-aware vulnerability management.
