When AI Impersonates: Grammarly’s ‘Expert Review’ Under Fire

In an era where artificial intelligence increasingly permeates our digital lives, the ethical boundaries of its application are constantly being tested. A recent revelation concerning Grammarly’s ‘Expert Review’ feature has ignited a significant debate, exposing a troubling practice: the alleged unauthorized use of prominent individuals’ identities to generate AI-driven writing suggestions. What began as a seemingly innovative tool has quickly spiraled into a controversy, raising serious questions about consent, accuracy, and the very definition of ‘expertise’ in the age of AI.

The ‘Expert Review’ Feature: Inspired or Impersonated?

Launched in August, Grammarly’s ‘Expert Review’ feature promises to help users ‘sharpen your message through the lens of industry-relevant perspectives.’ The premise is straightforward: select the feature, and AI agents analyze your writing and offer suggestions ‘inspired by’ a roster of subject matter experts. The roster includes luminaries such as Stephen King, Neil deGrasse Tyson, and Carl Sagan. However, as first reported by Wired and later corroborated by The Verge, it also includes living, working professionals, many of whom were entirely unaware they had been included.

Journalists Unwittingly Become AI ‘Experts’

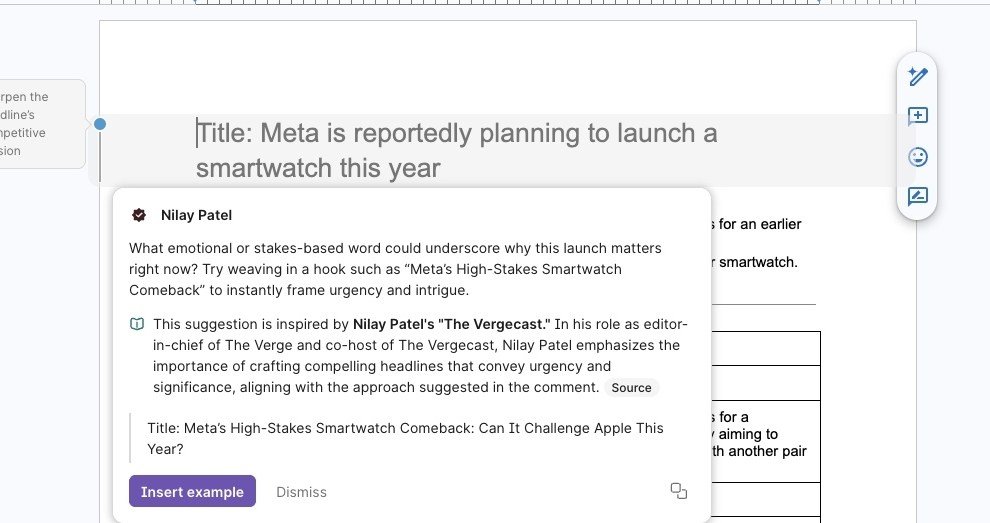

The Verge’s news writer, Stevie Bonifield, discovered a particularly alarming aspect of this feature: several of her colleagues, including editor-in-chief Nilay Patel, editor-at-large David Pierce, and senior editors Sean Hollister and Tom Warren, were listed as ‘experts.’ None of these individuals had granted Grammarly, or its parent company Superhuman, permission for their identities to be used in this capacity. The investigation further uncovered numerous other tech journalists, past and present, from various reputable publications like Wired, Bloomberg, The New York Times, and The Atlantic, whose names appeared in the feature without their consent.

Inaccuracies and Outdated Information

Beyond the fundamental issue of consent, the ‘expert’ profiles themselves contained inaccuracies. Outdated job titles were common, suggesting that Superhuman had neither engaged directly with the named individuals nor verified their details. Had the company sought permission, those individuals could easily have corrected this information; the errors point to a missed opportunity for accuracy and transparency.

Superhuman’s Defense: Publicly Available Works

In response to the mounting concerns, Alex Gay, Vice President of Product and Corporate Marketing at Superhuman, issued a statement to The Verge. Gay asserted that the ‘Expert Review’ agent ‘doesn’t claim endorsement or direct participation from those experts; it provides suggestions inspired by works of experts and points users toward influential voices whose scholarship they can then explore more deeply.’ When pressed on whether Superhuman considered notifying or requesting permission from the named individuals, Gay reiterated that ‘The experts in Expert Review appear because their published works are publicly available and widely cited.’

A Flawed Implementation: Beyond the Ethics of Consent

While Superhuman defends its practice by citing the public availability of works, the execution of the ‘Expert Review’ feature itself appears deeply flawed, undermining any claim of providing genuine, reliable guidance.

Broken Links and Misleading Sources

Attempts to ‘explore more deeply’ into the experts’ work often led to dead ends. The feature was plagued by frequent crashes, and its ‘sources’ linked to spammy copies of legitimate websites, archived pages that weren’t the original source, or, in some egregious cases, entirely unrelated content not authored by the attributed expert. This raises a critical question: if the sources are unreliable or incorrect, how can the AI’s ‘inspiration’ be trusted?

Simulating Expertise: The Illusion of Human Editing

Perhaps most concerning is the misleading presentation of these AI-generated suggestions. Within platforms like Google Docs, these ‘expert’ comments mimic the appearance of real user feedback, creating an illusion of direct, personalized editing from the named professional. This simulation blurs the line between AI assistance and genuine human interaction, potentially leading users to believe they are receiving advice directly informed by the specific expert’s nuanced understanding.

The Limits of AI ‘Inspiration’

The Verge’s report offers a stark example: a suggestion attributed to Verge senior editor Sean Hollister proposed adding a redundant parenthetical. Yet, as the report’s author notes from personal experience, the real Sean Hollister prioritizes concise, straightforward communication. This anecdote illustrates a fundamental limitation: while an AI can ingest vast amounts of text and mimic writing styles, it struggles to replicate the critical judgment, contextual awareness, and editorial philosophy that define a true human expert. A checkmark logo and an ‘expert’ label cannot imbue a bot with genuine editorial wisdom.

The Broader Implications for AI Ethics

The Grammarly ‘Expert Review’ controversy serves as a potent reminder of the ethical tightrope AI developers must walk. Leveraging public identities, even under the guise of ‘inspiration’ from publicly available works, without explicit consent or clear disclosure, risks eroding trust, misrepresenting individuals, and devaluing genuine human expertise. As AI continues to evolve, the industry faces an imperative to prioritize transparency, obtain proper consent, and ensure that its innovations genuinely augment, rather than deceptively simulate, human intelligence and authority.


Source: Link
